Explore the SaMD Verification and Validation Process

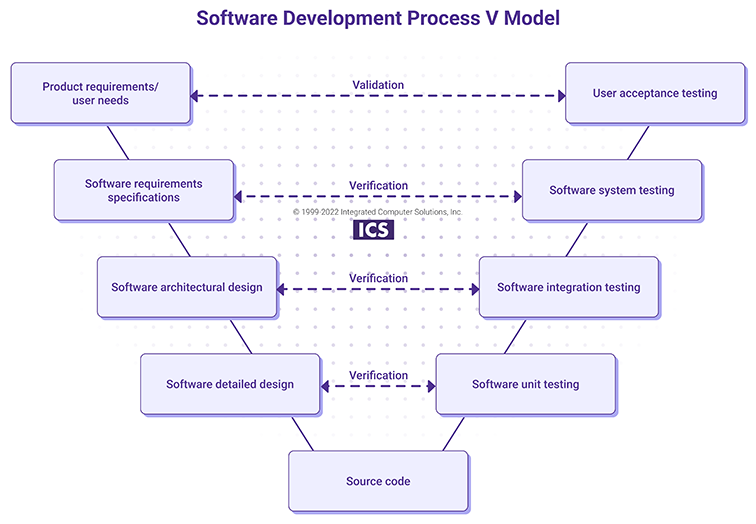

The software-as-a-medical-device (SaMD) lifecycle encompasses two important and often confused processes: verification and validation. Verification provides evidence that the specified requirements have been met and that design outputs match design inputs, and it generally precedes validation. Validation confirms that the medical device meets user needs and its intended use.

Both processes should be carried out at various stages of SaMD development to make sure that the delivered product is safe and effective. Here’s an overview of the SaMD verification and validation processes.

Verification Process

Verification confirms that the delivered software meets its specifications and is of high quality. It does so through activities that reduce coding errors, improve code quality, and check the specified requirements throughout SaMD development. Beyond the various types of testing, verification activities include static and dynamic analysis, code reviews and document inspections.

Code reviews

Once a part of the code is written, it is common practice in the software industry to perform a code review: an evaluation of the source code by people who were not involved in writing it. Code review also counts as a verification activity.

Code reviews are usually performed as inspections (the reviewers receive the code and criteria in advance) or walk-throughs (the author presents the code to the group). The type of code review typically depends on risk. For instance, a walk-through may be applied to any code that mitigates a serious risk identified in the dFMEA.

It’s crucial to determine the evaluation criteria and the areas that will be evaluated during the code review. These can include adherence to coding style and best practices, the level of source code comments, potential bugs, portability issues, security concerns, unit test coverage, and compliance with the detailed design specifications.

Even though no standard requires them, code reviews are considered a software development best practice. Most medical device companies include coding standards in their standard operating procedures (SOPs).

Static and dynamic code analysis

Static code analysis examines code before a program is run, whereas dynamic code analysis verifies code behavior during execution. During static code analysis, the code is checked against a set of coding rules and standards that address elements like formatting, naming conventions and documentation. It’s usually performed after code implementation and before unit, integration and system testing, so it enables early detection of common errors and poor programming practices.

Because it takes place during program execution, dynamic code analysis helps to identify performance problems, memory issues, crashes and other bugs that only appear at runtime, which reduces the number of errors that reach production. Both static and dynamic code analysis are performed using tools. The choice of tool depends mainly on the programming language and on the coding standards the tool supports.
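
To make the distinction concrete, here is a minimal Python sketch. It is an illustration only: the function names are hypothetical, pylint is just one example of a static analyzer (it reports mutable default arguments as `dangerous-default-value`), and the standard-library `tracemalloc` module stands in for a dynamic analysis tool.

```python
import tracemalloc


def append_reading(value, readings=[]):
    # Static finding: the default list is created once and shared between
    # calls. A linter can report this without ever running the code.
    readings.append(value)
    return readings


def append_reading_fixed(value, readings=None):
    # Corrected version: a fresh list is created on every call.
    if readings is None:
        readings = []
    readings.append(value)
    return readings


def build_buffer(sample_count):
    # Hypothetical workload whose memory use depends on its input.
    return [0.0] * sample_count


def peak_memory_bytes(func, *args):
    # Dynamic finding: memory behavior is only observable while the code runs.
    tracemalloc.start()
    try:
        func(*args)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return peak
```

Calling `append_reading(1)` and then `append_reading(2)` returns `[1, 2]` on the second call, which is the shared-state defect the static check warns about. `peak_memory_bytes(build_buffer, 100_000)` reports a much larger peak than the same call with a small input, which is the kind of observation only a dynamic tool can make.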

Testing

Many types of testing can be used in the SaMD verification process. The methods chosen depend on the nature of the software and the scope of the project. By testing level, they can be divided into unit, integration and system tests.

Unit tests

The first level of software testing, unit testing verifies individual software units or components (an individual function, method, module, or object). Most unit tests use white box testing, a technique in which the internal structure, design and coding of the item are known. Developers can write unit tests before or after coding the software unit. A good practice is to use a test framework (e.g. JUnit, NUnit, GoogleTest) to develop automated test cases that can be executed as part of the Continuous Integration (CI) process. The results of unit testing should be part of the verification documentation.

According to the standard for medical device software lifecycle processes (IEC 62304), software unit verification is required for software classes B and C. However, unit testing is recommended for all SaMDs because it helps detect bugs early in development, which in turn reduces costs.
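
As an illustration of an automated, white box unit test, the sketch below uses Python's built-in `unittest` module. The unit under test, `mean_heart_rate`, and its validation rules are hypothetical and exist only for this example.

```python
import unittest


def mean_heart_rate(samples_bpm):
    """Hypothetical software unit: average of heart-rate samples in bpm."""
    if not samples_bpm:
        raise ValueError("at least one sample is required")
    if any(sample <= 0 for sample in samples_bpm):
        raise ValueError("samples must be positive")
    return sum(samples_bpm) / len(samples_bpm)


class MeanHeartRateTest(unittest.TestCase):
    def test_typical_samples(self):
        self.assertAlmostEqual(mean_heart_rate([60, 70, 80]), 70.0)

    def test_empty_input_is_rejected(self):
        # White box: this exercises the first validation branch.
        with self.assertRaises(ValueError):
            mean_heart_rate([])

    def test_non_positive_sample_is_rejected(self):
        # White box: this exercises the second validation branch.
        with self.assertRaises(ValueError):
            mean_heart_rate([60, 0])


def run_unit_tests():
    # Runs the test case programmatically, as a CI step might.
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(MeanHeartRateTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

In a CI pipeline the same tests would more typically be discovered with `python -m unittest`, and the runner's report could be archived as part of the verification documentation.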

Integration testing

The next level of software testing is integration testing, which aims to verify interfaces and data flow between integrated software units or components. This type of testing is usually done manually based on the integration test plan, which defines the testing strategy (e.g. big bang, top-down or bottom-up). The results of such testing should be documented.

As with unit testing, integration testing is required only for software classes B and C per IEC 62304. A common approach for SaMD is to combine integration testing with system testing.
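
Although integration testing is often manual, the idea of checking an interface between two units can be sketched in code. The example below assumes a bottom-up strategy and two hypothetical units, a parser and a classifier. The `HR=<number>` format and the thresholds are invented for illustration and are not clinical guidance.

```python
def parse_reading(line):
    """Unit A (hypothetical): parse 'HR=<int>' into beats per minute."""
    key, _, value = line.partition("=")
    if key.strip() != "HR" or not value.strip().isdigit():
        raise ValueError(f"malformed reading: {line!r}")
    return int(value)


def classify(bpm, low=50, high=100):
    """Unit B (hypothetical): thresholds are illustrative only."""
    if bpm < low:
        return "low"
    if bpm > high:
        return "high"
    return "normal"


def process(line):
    # Integrated path: the output of parse_reading is the input of classify.
    return classify(parse_reading(line))


def run_integration_checks():
    # Each case checks that data flows correctly across the interface.
    cases = {"HR=45": "low", "HR=72": "normal", "HR = 130": "high"}
    for line, expected in cases.items():
        assert process(line) == expected, line
    # A malformed reading must be rejected before it reaches the classifier.
    try:
        process("HR=abc")
    except ValueError:
        return True
    return False
```

The point of the integration check is the hand-off: each unit could pass its own unit tests while the combined path still fails, for example if the parser returned a string and the classifier expected a number.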

System testing

The completed software product is verified with system testing, which uses different methods and tools to evaluate all specified functionalities and the way all software components interact as a whole. System testing usually encompasses black box testing, which focuses on software input and output and tests software functionalities without having knowledge of internal code structure. System testing may be performed manually or automatically, and may include both functional and non-functional (performance, usability, reliability, security) tests.

According to IEC 62304, system testing is required for all software safety classes (A, B, C). A detailed test plan and test protocols should be developed and executed, and the results recorded in test reports. Some types of system testing (e.g. usability testing) may also be considered a validation activity for standalone software.

Validation Process

The validation process aims to ensure that the SaMD meets user needs. This is achieved through various activities such as clinical evaluation or clinical trials, analysis and inspection methods. User tests also play an important role in the validation process.

The purpose of user testing (also known as user acceptance testing, user site testing, beta testing or summative testing) is to validate the software product with the end users. It’s crucial to include all relevant user profiles (e.g. user characteristics, age, gender, knowledge) and perform tests in the actual or a simulated environment. The tested software should be a SaMD release candidate.

The V Model shows how the described verification and validation activities may be implemented at all levels of the software development lifecycle.

How Much V&V Effort is Enough?

There’s no simple answer to this question. Verification and validation efforts should be proportionate to the level of risk posed by the software. Therefore, the amount and type of applicable testing, as well as other verification and validation activities, depend on the software safety classification and risk analysis.
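
As a simple illustration of scaling effort with safety class, the sketch below encodes only the testing levels this article lists as required under IEC 62304 (unit and integration testing for classes B and C, system testing for all classes). The names are hypothetical, and the table is a summary of this article, not a substitute for the standard or for a project's own risk analysis.

```python
# Testing levels required per safety class, as summarized in this article.
REQUIRED_TEST_LEVELS = {
    "A": {"system"},
    "B": {"unit", "integration", "system"},
    "C": {"unit", "integration", "system"},
}


def missing_test_levels(safety_class, completed):
    """Return the required testing levels that have not been completed yet."""
    try:
        required = REQUIRED_TEST_LEVELS[safety_class.upper()]
    except KeyError:
        raise ValueError(f"unknown safety class: {safety_class!r}") from None
    return sorted(required - set(completed))
```

For a class C product with only unit testing completed, `missing_test_levels("C", ["unit"])` lists integration and system testing as still outstanding.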

Code coverage can serve as supporting evidence that the software was thoroughly tested. The standards do not specify a required level of code coverage; instead, it’s recommended to apply a risk-based approach and define the target in the verification test plan. Eighty percent is usually a good number to shoot for. Attempting to go higher may be costly, and the added effort doesn't always provide a real benefit.
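
A risk-based coverage check might look like the following sketch. Line coverage is computed as covered lines divided by total lines, and each unit is compared with its own target. The 80 percent default comes from the guidance above; the unit names and the stricter target for a risk-mitigating unit are assumptions made for this example.

```python
def coverage_percent(covered_lines, total_lines):
    """Line coverage as a percentage of executable lines."""
    if total_lines <= 0:
        raise ValueError("total_lines must be positive")
    if not 0 <= covered_lines <= total_lines:
        raise ValueError("covered_lines must be between 0 and total_lines")
    return 100.0 * covered_lines / total_lines


def units_below_target(report, targets, default_target=80.0):
    """Return {unit: (coverage, target)} for units that miss their target.

    report maps a unit name to (covered_lines, total_lines).
    targets maps a unit name to a risk-based target in percent.
    Units without an explicit target fall back to default_target.
    """
    shortfalls = {}
    for unit, (covered, total) in report.items():
        percent = coverage_percent(covered, total)
        target = targets.get(unit, default_target)
        if percent < target:
            shortfalls[unit] = (round(percent, 1), target)
    return shortfalls
```

With a hypothetical report of `{"dose_calc": (188, 200), "ui_theme": (130, 200), "logging": (170, 200)}` and a stricter target of 95 percent for `dose_calc`, the check flags `dose_calc` and `ui_theme` while `logging` passes at the default.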

Summary

The manufacturer should exercise due diligence to ensure that the software is safe, effective and meets all requirements and user needs. This is achieved mainly through verification and validation activities carried out throughout the software development lifecycle.
