Accelerate Development of Your Digital Twin Training Simulator

Right before the holidays, I had the opportunity to attend the annual Interservice/Industry Training, Simulation and Education Conference (I/ITSEC) tradeshow – the world's largest modeling, simulation and training event that presents the latest tools and techniques for building training and simulation systems.

While the show focuses on military systems, the Healthcare Pavilion in the exhibit hall brings together companies with a footprint in the medtech field. There I discovered that the technique we use here at ICS to rapidly develop medical devices can be applied to the creation of VR-based digital twins for training systems based on any existing device.

Rapid Development Tool

For the past few years the team at ICS has been using our rapid development approach to speed up the creation of new devices. At a high level, here’s how it works: we start with the user experience (UX) design of the device, using Figma to capture the design.

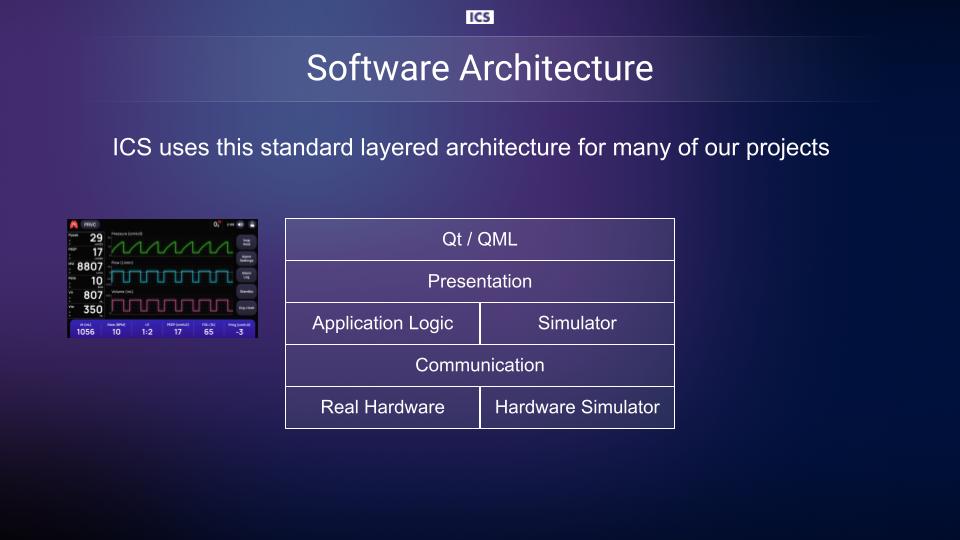

We then directly import the design into our rapid development tool to create a working version of the UI, which is built on our layered architecture and the Qt toolkit. From there, our software engineers quickly build out the rest of the software needed to create a working device – often in about half the time it would take to write it all from scratch.

But speed is not the only advantage of our approach. Another benefit is that the standardized architecture allows us to easily create simulators for use during development. For instance, when developing for embedded devices, the software team often runs ahead of hardware development.

This can leave the software team waiting on hardware and create critical path issues in the overall schedule. We have mitigated this concern with an architecture that lets us use a simulator with a standard API to replace that hardware dependency. The application logic layer can also be simulated, permitting a UI team to keep developing while the algorithm team is working out some of the more complex parts of the system.
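To make the idea concrete, here is a minimal sketch of a simulator standing in for hardware behind a shared API. It is written in Python for brevity and is not ICS's actual architecture; the names `SensorBackend`, `SimulatedSensor`, and `Thermostat`, and the toy heating model, are all hypothetical.

```python
from abc import ABC, abstractmethod


class SensorBackend(ABC):
    """Hypothetical hardware-facing API that the application layer depends on."""

    @abstractmethod
    def read_temperature_c(self) -> float:
        ...

    @abstractmethod
    def set_heater(self, on: bool) -> None:
        ...


class SimulatedSensor(SensorBackend):
    """Stand-in used while the real board is unavailable (assumed toy physics)."""

    def __init__(self, start_c: float = 20.0, step_c: float = 0.5) -> None:
        self._temp_c = start_c
        self._step_c = step_c
        self._heater_on = False

    def read_temperature_c(self) -> float:
        # Each read advances the fake physics by one step: warm up when the
        # heater is on, cool down when it is off.
        self._temp_c += self._step_c if self._heater_on else -self._step_c
        return self._temp_c

    def set_heater(self, on: bool) -> None:
        self._heater_on = on


class Thermostat:
    """Application logic; it knows only the SensorBackend interface."""

    def __init__(self, backend: SensorBackend, target_c: float) -> None:
        self._backend = backend
        self._target_c = target_c

    def tick(self) -> float:
        temp = self._backend.read_temperature_c()
        # Heat only while below the target temperature.
        self._backend.set_heater(temp < self._target_c)
        return temp


def run_demo() -> list[float]:
    """Drive the application logic against the simulator for five ticks."""
    thermostat = Thermostat(SimulatedSensor(start_c=20.0), target_c=21.0)
    return [thermostat.tick() for _ in range(5)]


if __name__ == "__main__":
    print(run_demo())
```

Because `Thermostat` depends only on the interface, a driver for the real hardware could later be passed in without touching the application logic, which is the property that lets software work proceed before the hardware is ready.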

Building Training Systems

How does this approach help in the creation of training systems? The technique starts with the output of the UX team, which can be a representation of an existing system instead of a new design. This means that the UX team can review the screens on an existing system and create a version of them in Figma, a process that can often be done quickly.

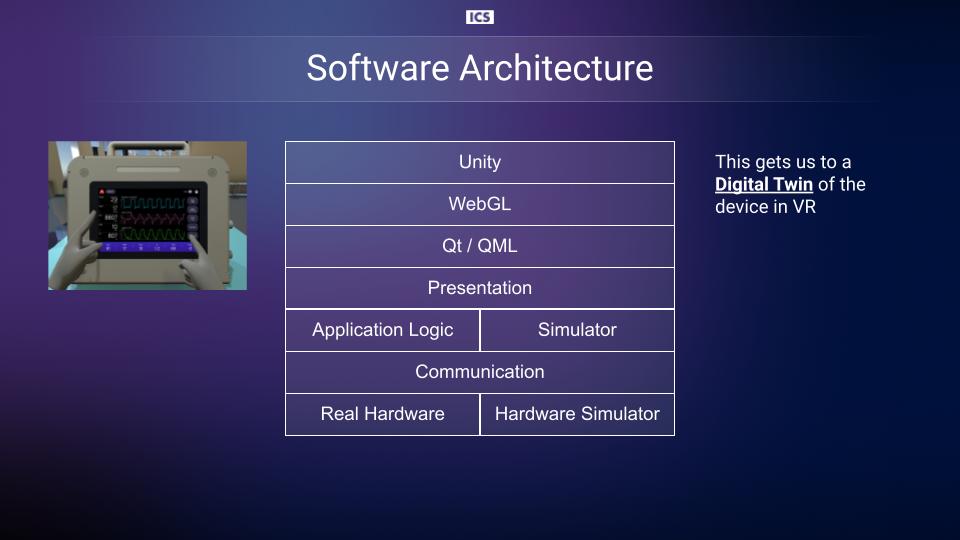

Once the Figma version exists, the rapid development pipeline described above can be applied, providing a version of the device that can respond to simulators and scripting. While the system is technically usable at this point, multiple studies have shown that training conducted in virtual or augmented reality is markedly more effective, so our next step is to create the VR version of the system.

This is where our rapid development tool and the Qt toolkit again play an important role. A simple recompile for the web (Qt applications build to WebAssembly and render through WebGL) delivers a version that can run seamlessly in Unity, the world’s leading platform for creating and operating interactive, real-time 3D content.

We now have a VR-based digital twin of the UI-based portion of any software system. A user can interact with the twin, with scenarios and responses provided by the simulators and dynamic scripts. Attaching to the simulators with any existing or custom training dashboard now becomes a straightforward activity.
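As a rough illustration of how a scripted scenario might drive a simulator while a training dashboard listens in, consider the Python sketch below. It is an assumption-laden example, not the actual ICS tooling: `ScenarioStep`, `DeviceSimulator`, the signal names, and the callback signature are all invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical listener signature: (tick, signal name, new value).
Listener = Callable[[int, str, float], None]


@dataclass
class ScenarioStep:
    at_tick: int   # simulator tick at which the step fires
    signal: str    # name of the simulated value to change
    value: float   # new value for that signal


@dataclass
class DeviceSimulator:
    script: list[ScenarioStep]
    state: dict[str, float] = field(default_factory=dict)
    listeners: list[Listener] = field(default_factory=list)
    tick_count: int = 0

    def subscribe(self, listener: Listener) -> None:
        """Attach an observer such as a training dashboard."""
        self.listeners.append(listener)

    def tick(self) -> None:
        # Apply every scripted step scheduled for the current tick and
        # notify all observers of the change.
        for step in self.script:
            if step.at_tick == self.tick_count:
                self.state[step.signal] = step.value
                for notify in self.listeners:
                    notify(self.tick_count, step.signal, step.value)
        self.tick_count += 1


def run_scenario() -> list[tuple[int, str, float]]:
    """Play a short scripted scenario and return what the dashboard saw."""
    script = [
        ScenarioStep(0, "heart_rate_bpm", 72.0),
        ScenarioStep(2, "heart_rate_bpm", 140.0),
        ScenarioStep(3, "alarm_active", 1.0),
    ]
    sim = DeviceSimulator(script)
    dashboard_log: list[tuple[int, str, float]] = []
    sim.subscribe(lambda tick, signal, value: dashboard_log.append((tick, signal, value)))
    for _ in range(5):
        sim.tick()
    return dashboard_log


if __name__ == "__main__":
    for event in run_scenario():
        print(event)
```

Keeping the scenario as plain data means an instructor could swap in a different script without changing the simulator, and the dashboard only needs to subscribe to receive every change.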

This technique is exceptionally promising as it offers virtually limitless possibilities for creating training systems. Get in touch if you’d like to learn more about creating your own digital twin training simulator.